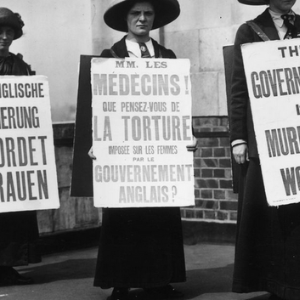

Why, oh why, do women have to continually fight for their fundamental rights? Why are we seen as lesser than the men around us? Why do we have to beg and plead for basic equality? I am oh so tired of having my rights stripped away by old, sexist white men who know nothing about the female anatomy. I don’t understand why we have to beg for autonomy over our bodies and for equal pay, let alone respect. We work twice as hard as men just to prove ourselves, and we are still seen as less capable and too emotional, our place in society still assumed to be that of homemaker for husbands and children. More than a hundred years have passed since the women’s suffrage movement in America, and I fear not much has changed. I can’t believe we are reverting to such dark times for women. Why, oh why?

Saturday, Jun 6, 2026